BsoBird opened a new issue, #7281: URL: https://github.com/apache/iceberg/issues/7281

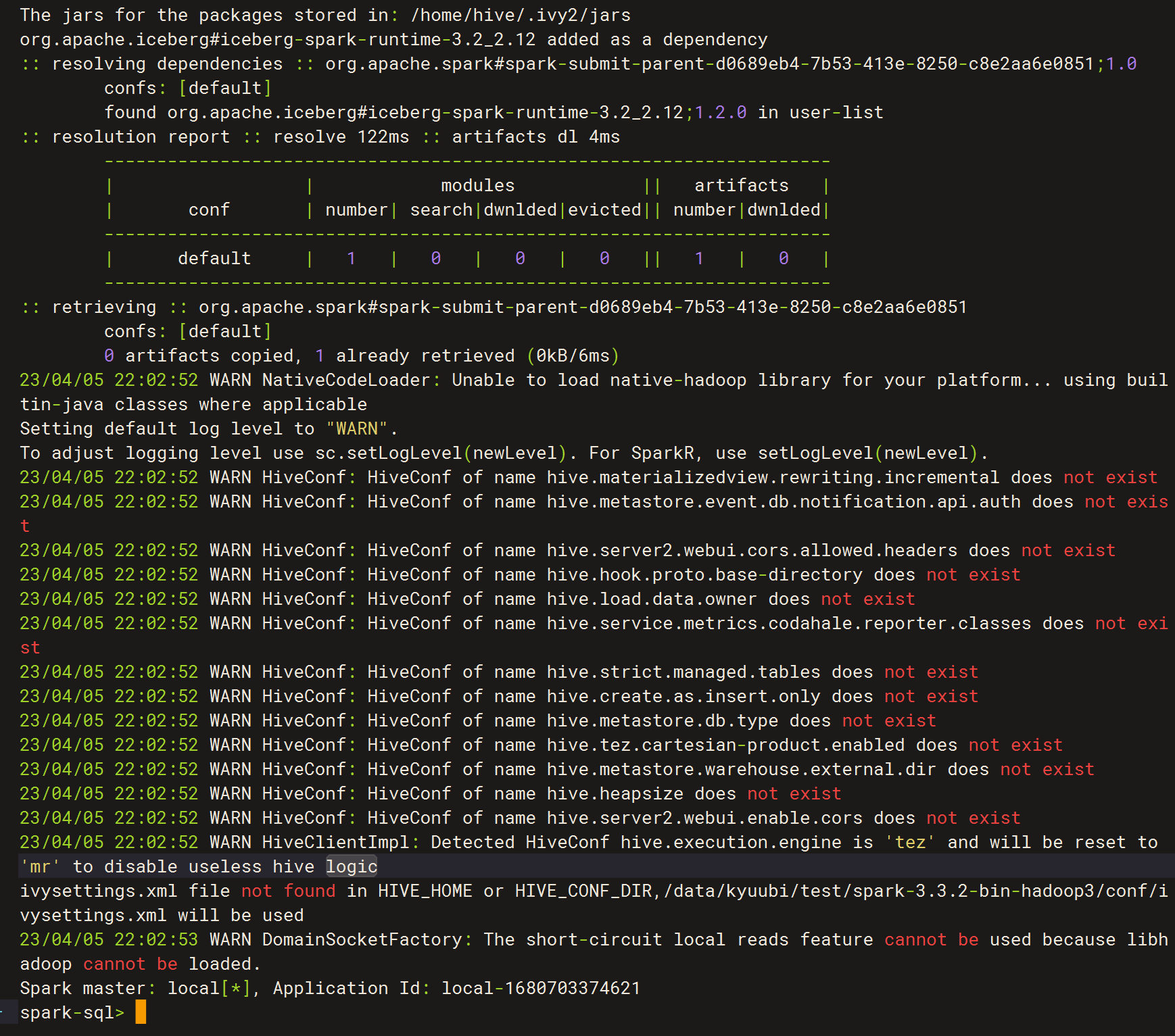

### Query engine

spark = "3.3.2"
iceberg = "1.2.0"

### Question

Step 1: run this command:

```
bin/spark-sql --packages org.apache.iceberg:iceberg-spar.2_2.12:1.2.0 --conf spark.sql.catalog.local=org.apache.iceberg.spark.SparkCatalog --conf spark.sql.catalog.local.type=hadoop --conf spark.sql.catalog.local.warehouse=/iceberg-catalog/warehouse --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions
```

Step 2: run this statement in spark-sql:

```
CALL local.system.rewrite_data_files(table => 'default.iceberg_test', strategy => 'sort', sort_order => 'zorder(id,data)');
```

Then I got an error:

```
23/04/05 21:57:34 ERROR SparkSQLDriver: Failed in [CALL system.rewrite_data_files(table => 'default.iceberg_test', strategy => 'sort', sort_order => 'zorder(id,data)')]
23/04/05 21:57:34 ERROR SparkSQLDriver: Failed in [CALL system.rewrite_data_files(table => 'default.iceberg_test', strategy => 'sort', sort_order => 'zorder(id,data)')]
java.lang.NoSuchMethodError: org.apache.spark.sql.catalyst.trees.Origin.<init>(Lscala/Option;Lscala/Option;)V
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergParserUtils$.position(IcebergSqlExtensionsAstBuilder.scala:334)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergParserUtils$.withOrigin(IcebergSqlExtensionsAstBuilder.scala:324)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSqlExtensionsAstBuilder.visitSingleStatement(IcebergSqlExtensionsAstBuilder.scala:291)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSqlExtensionsAstBuilder.visitSingleStatement(IcebergSqlExtensionsAstBuilder.scala:63)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSqlExtensionsParser$SingleStatementContext.accept(IcebergSqlExtensionsParser.java:152)
	at org.antlr.v4.runtime.tree.AbstractParseTreeVisitor.visit(AbstractParseTreeVisitor.java:18)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSparkSqlExtensionsParser.$anonfun$parsePlan$1(IcebergSparkSqlExtensionsParser.scala:131)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSparkSqlExtensionsParser.parse(IcebergSparkSqlExtensionsParser.scala:233)
	at org.apache.spark.sql.catalyst.parser.extensions.IcebergSparkSqlExtensionsParser.parsePlan(IcebergSparkSqlExtensionsParser.scala:131)
	at org.apache.spark.sql.SparkSession.$anonfun$sql$2(SparkSession.scala:620)
	at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)
	at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:620)
	at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:779)
	at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:617)
	at org.apache.spark.sql.SQLContext.sql(SQLContext.scala:651)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLDriver.run(SparkSQLDriver.scala:67)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processCmd(SparkSQLCLIDriver.scala:384)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1(SparkSQLCLIDriver.scala:504)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1$adapted(SparkSQLCLIDriver.scala:498)
	at scala.collection.Iterator.foreach(Iterator.scala:943)
	at scala.collection.Iterator.foreach$(Iterator.scala:943)
	at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)
	at scala.collection.IterableLike.foreach(IterableLike.scala:74)
	at scala.collection.IterableLike.foreach$(IterableLike.scala:73)
	at scala.collection.AbstractIterable.foreach(Iterable.scala:56)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processLine(SparkSQLCLIDriver.scala:498)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver$.main(SparkSQLCLIDriver.scala:286)
	at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.main(SparkSQLCLIDriver.scala)
	at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
	at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
	at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
	at java.lang.reflect.Method.invoke(Method.java:498)
	at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)
	at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:958)
	at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)
	at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)
	at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)
	at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1046)
	at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1055)
	at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
```

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

To unsubscribe, e-mail: [email protected]

For queries about this service, please contact Infrastructure at:
[email protected]